This is backwards, and the example is clearly an edge case.

It’s difficult to reason about without having something to point at.

You say Collectors (edit: I mean Instances) will get heavy, I say they won’t.

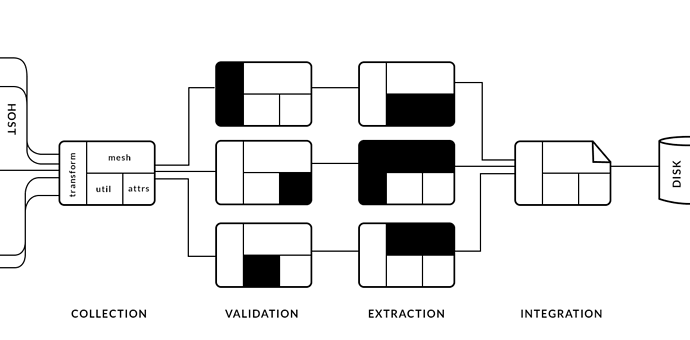

You say collecting a lot of information makes for one complex Collector. I say collecting information in Validators makes for many complex Validators, not to mention a clash of responsibilities, which adds cognitive load and makes each one take longer to comprehend.

Complexity needs a home. I’m saying we put it where it is expected, not spread it out, and allow business logic to be as simple as can be. Business logic is what makes a pipeline, the rest is technicalities.
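To have something to point at, here is a minimal sketch of that split in plain Python. It is not the Pyblish API; the scene dictionary, `collect_instance`, `validate_has_meshes`, and `validate_naming` are all hypothetical names made up for illustration. The idea is only that the digging happens once in the Collector, and the Validators read what was collected.

```python
# Hypothetical stand-in for a scene; not real Maya or Pyblish data.
SCENE = {
    "nodes": [
        {"name": "body_GEO", "type": "mesh"},
        {"name": "head_GEO", "type": "mesh"},
        {"name": "persp", "type": "camera"},
    ]
}


def collect_instance(scene):
    """Collector: all the scene digging happens here, once."""
    meshes = [n["name"] for n in scene["nodes"] if n["type"] == "mesh"]
    return {"name": "ben", "family": "model", "meshes": meshes}


def validate_has_meshes(instance):
    """Validator: business logic only, reads what was collected."""
    return len(instance["meshes"]) > 0


def validate_naming(instance):
    """Validator: every mesh must end with the (assumed) `_GEO` suffix."""
    return all(name.endswith("_GEO") for name in instance["meshes"])


instance = collect_instance(SCENE)
results = [validate_has_meshes(instance), validate_naming(instance)]
```

Each Validator here is a one-liner because the Collector already did the technical work. If the Validators had to query the scene themselves, the mesh lookup would be repeated in both.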

In other news, I’ve encountered a problem in our current design for associating an asset with a family.

Currently, the working file is used to determine a family, and the family is then used to determine where to publish.

This means we can only ever publish a single family from a scene. In the case of the ben model, we currently have two: one for the mesh, and one for the quicktime and gif. Both need to go into the final published directory, but at this point they would produce different unique paths.

To solve this, instead of basing the output path on the family, we could base it on the task, for example modeling.

This way, modeling determines how files are initially created via be and finally published via pyblish. The contained instances within the scene then just follow along for the ride. Symmetry is a good thing.
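A rough sketch of the difference, assuming a made-up directory layout. The root path, the `v001` version folder, and the family names `model` and `review` are my assumptions for illustration, not our actual schema.

```python
import posixpath

# Hypothetical publish root; the real layout may differ.
ROOT = "/projects/demo/assets/ben/publish"

# Two instances in one scene, each with its own (assumed) family.
INSTANCES = [
    {"name": "ben_mesh", "family": "model"},
    {"name": "ben_turntable", "family": "review"},
]


def family_based_path(root, family, version):
    """Current design: the family decides the output directory."""
    return posixpath.join(root, family, "v%03d" % version)


def task_based_path(root, task, version):
    """Proposed design: the task decides the output directory."""
    return posixpath.join(root, task, "v%03d" % version)


# One path per family, so the instances end up in different places.
by_family = {family_based_path(ROOT, i["family"], 1) for i in INSTANCES}

# One path for the whole scene; instances follow along for the ride.
by_task = {task_based_path(ROOT, "modeling", 1) for _ in INSTANCES}
```

With the family-based function the two instances produce two directories; with the task-based one they share a single directory regardless of how many families the scene contains.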

In that sense, it would be great if a Validator could ensure that the nodes in an instance are actually of use. Figuring out which nodes are actually of use remains tricky, though.
