i used the holidays to work on the pyblish plugin manager, and finished the MVP.

using it is simple:

1. create a pipeline config

create a json file that maps each plugin name to its settings: {[plugin_name]: plugin_settings, …}

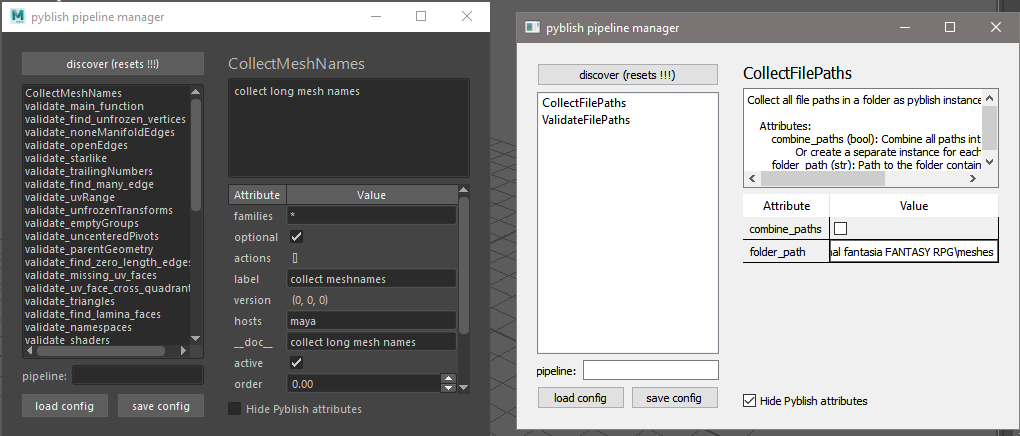

or you can use the GUI tool i made for this.
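if you'd rather write the file by hand or from a script, here is a minimal sketch using python's json module. the plugin names (`ValidateNaming`, `ExtractCache`), the setting keys and the file name are made up for illustration, the real keys depend on what your plugins expose.

```python
import json
import os
import tempfile

# hypothetical plugin names and settings, only for illustration
config = {
    "ValidateNaming": {"optional": True, "active": True},
    "ExtractCache": {"active": False},
}

# write the config to a temporary folder so the example has no side effects
config_path = os.path.join(tempfile.mkdtemp(), "sample_config.json")
with open(config_path, "w") as f:
    json.dump(config, f, indent=4)

# read it back to confirm the round trip
with open(config_path) as f:
    loaded = json.load(f)
```

the result is a plain {plugin_name: plugin_settings} mapping, so it stays easy to diff and to edit in any text editor.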

2. apply a pipeline config

register plugins like normal, then register the config (which is a pyblish plugin filter).

from pyblish_config.config import register_config_filter

register_config_filter('sample_config_folder.json')

import pyblish_lite

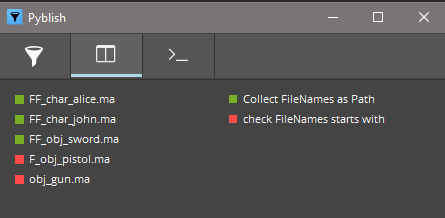

pyblish_lite.show()

your plugin settings are now applied, explicitly.

the implementation uses pyblish filters, and complements all current pyblish features.

no re-architecting is needed.
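to give an idea of how a filter can apply settings, here is a rough sketch. it is not the actual pyblish_config code: `make_config_filter` and the two plugin classes are hypothetical stand-ins, and it assumes a filter is just a callable that receives the list of discovered plugin classes and modifies them in place.

```python
def make_config_filter(config):
    """Build a filter that applies `config` to plugin classes in place.

    `config` is assumed to be a {plugin_name: {attribute: value}} dict.
    """
    def config_filter(plugins):
        for plugin in plugins:
            # plugins without an entry in the config are left untouched
            settings = config.get(plugin.__name__, {})
            for attr, value in settings.items():
                setattr(plugin, attr, value)
    return config_filter


# hypothetical stand-ins for real pyblish plugin classes
class ValidateNaming:
    optional = False
    active = True


class ExtractCache:
    optional = False
    active = True


config = {
    "ValidateNaming": {"optional": True},
    "ExtractCache": {"active": False},
}

plugins = [ValidateNaming, ExtractCache]
make_config_filter(config)(plugins)
```

in a real session you would hand the callable to pyblish's filter registration instead of calling it yourself. since it only overrides attributes on plugins that are already registered, nothing about discovery or registration has to change.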

most of the time was spent on making the config creator intuitive and user-friendly.

the code currently lives outside of the pyblish base repo. we could bring the load config function into pyblish base (it's a single function).

all other code is related to the config creator, which like any pyblish UI has its own repo.

there will likely be overlap between the config creator and the plugin manager (think plugin marketplace, where you read descriptions of public plugins).

for now i want to focus solely on explicit plugin management, so pretend the marketplace doesn't exist yet.


I’m cautiously optimistic about the idea of “converters”, though it does seem like a steep hill to climb. Are node paths the only thing of interest? What about PyMEL attributes or data types like matrices etc? I’ve even heard of some using the MASH Python library, which is apparently a thing.
